Anthropic CEO Warns AI Could Let One Person Command a Drone Swarm

In January, China’s PLA demonstrated what a single operator directing 200 fixed-wing drones looks like. Anthropic CEO Dario Amodei is now warning that with AI, that number could scale by orders of magnitude, and the operator count could drop to one. The Defense Post’s Military AI publication reports that Amodei has sounded the alarm about AI enabling hyper-centralized military command structures, in which a tiny group, or a single leader, could direct enormous weapons networks without the human accountability chains that exist inside today’s militaries.

- The Warning: Anthropic CEO Dario Amodei says misused or poorly governed AI could allow a single individual to direct large-scale drone weapons networks — bypassing the legal and ethical oversight built into conventional military structures.

- The Gap: Today’s militaries distribute command authority across human operators bound by law; AI-enabled swarm control collapses that distribution into one node.

- The Backdrop: China’s PLA already demonstrated 200-drone single-operator control in early 2026, and SpaceX is competing in a Pentagon voice-controlled swarm contest.

- The Stakes: Without governance frameworks to match the technology, the infrastructure for autonomous mass-casualty drone warfare could end up in the wrong hands — state or non-state.

Amodei’s Warning Lands in a Specific Technological Context

Dario Amodei’s concern is not abstract. He is describing a concrete capability trajectory: as AI systems become capable of autonomous coordination across large drone networks, the human-to-weapon ratio inverts. Instead of many operators managing a few assets, one operator manages thousands. Amodei’s argument, consistent with Anthropic’s published safety research, is that this inversion collapses accountability in ways that current international law and military doctrine are not built to handle. The person giving the order and the weapon executing it are separated by layers of AI decision-making — layers that courts, commanders, and conventions of war were never designed to audit.

This is not a theoretical future. We covered in January how China’s PLA broadcast footage of a single soldier directing 200 fixed-wing drones launched simultaneously from multiple vehicles — a test conducted by the National University of Defence Technology that revealed what the Pentagon has been calling its worst nightmare. The jump from 200 drones to 2,000, or 20,000, is an engineering and software problem, not a conceptual one. The AI required to manage that scale already exists in prototype form.

Centralized Control Is the Real Threat, Not Just the Drones

The danger Amodei identifies is specifically about power concentration: AI-enabled drone warfare does not just make attacks cheaper and faster, it makes them something a very small group can execute unilaterally. Today, launching a military drone campaign requires logistics chains, legal authorization, and command hierarchies — all of which create friction and accountability. AI removes most of that friction. A single actor with access to the right AI platform and a drone arsenal could, in principle, execute operations that currently require battalion-level resources.

We reported in February on how a soldier with $300 FPV goggles and a $500 drone can destroy a $5 million tank. That math is already brutal. Now extend it: an AI system that autonomously coordinates hundreds of such drones, guided by a single person at a laptop. The barrier to mass-casualty weapons drops from nation-state budgets to well-funded non-state actors, criminal organizations, or authoritarian leaders running outside conventional military structures.

The cartel angle is not hypothetical either. We reported in March on how Mexico’s CJNG was deploying drones and AI tools as part of its operational arsenal. The same AI-swarm architecture Amodei warns about at the state level has non-state precedents already in the field.

The Pentagon and Its Allies Are Racing Toward the Same Capability

The uncomfortable reality in Amodei’s warning is that the United States is actively building the technology he describes as dangerous. SpaceX is competing in a secret Pentagon challenge to build voice-controlled, autonomous drone swarming systems. The U.S. Army’s Purpose Built Attritable Systems program is pushing toward mass drone acquisition. The Pentagon’s Defense Innovation Unit published a solicitation for containerized drone launchers that can store, launch, and recover swarms on command.

The strategic logic is sound: if China’s PLA is doing this, the U.S. has to match it. Eric Schmidt’s AI drone program in Ukraine achieved a kill rate above 70%, the kind of result that makes the technology irresistible to defense planners regardless of what ethics frameworks say. A NATO Hedgehog exercise showed 10 Ukrainian drone operators wiping out two simulated battalions in a single day. No military watching that will slow down; they will accelerate.

What Amodei is really arguing is that acceleration without governance creates a window of extreme risk. The New York Times editorial board warned in December 2025 that AI drone swarms capable of hunting and killing autonomously represent a category of weapon the U.S. military has not yet figured out how to govern — or defend against. Binding international agreements on autonomous weapons have been debated through the UN’s Convention on Certain Conventional Weapons process for over a decade with no treaty to show for it. The technology is not waiting for the diplomats.

China’s DeepSeek Integration Makes the Timeline Shorter

China’s PLA has already integrated DeepSeek AI into drone swarms and robot dogs, according to a Reuters investigation we covered in October 2025. That integration is the kind of development Amodei is warning about: capable AI married to autonomous weapons, deployed at scale, under centralized command. Amodei has separately argued that U.S. chip export controls on China matter more than ever — because the compute advantage is the only structural brake on how fast China can scale these systems.

The timeline is what matters here. If AI-enabled swarm control reaches operational maturity before international governance frameworks exist, the window for establishing norms closes.

DroneXL’s Take

Amodei is one of the few people in the AI industry who makes the specific argument rather than gesturing at vague risk. The framing is precise: the danger isn’t AI drones in general. It’s that AI removes the distributed human accountability that makes military force governable. That’s a real structural shift, and it’s worth saying plainly.

I’ve been covering this space long enough to remember when “autonomous drone” meant a pre-programmed waypoint flight. What’s happening now is a different category of technology: single operators running hundreds of AI-coordinated platforms, voice-commanded swarms, kill rates above 70%. The PLA footage I watched in January was a turning point. That wasn’t a demo. That was a capability signal.

The governance gap is real. Within six months, expect at least one Western government to propose a formal threshold — a maximum drone-to-operator ratio — as the basis for autonomous weapons regulation. It won’t be binding, and it won’t stop China or non-state actors. But it will be the first attempt to put a number on what Amodei is describing, and that matters for how the next generation of drone warfare doctrine gets written.

The deeper problem is that the U.S. can’t have it both ways. You can’t build the SpaceX voice-command swarm system, fund the 70% kill-rate AI drone program, and simultaneously argue for global governance of the same technology. That contradiction needs to be addressed openly — not buried in classified acquisition documents.

Editorial Note: AI tools were used to assist with research and archive retrieval for this article. All reporting, analysis, and editorial perspectives are by Haye Kesteloo.

Discover more from DroneXL.co

Subscribe to get the latest posts sent to your email.

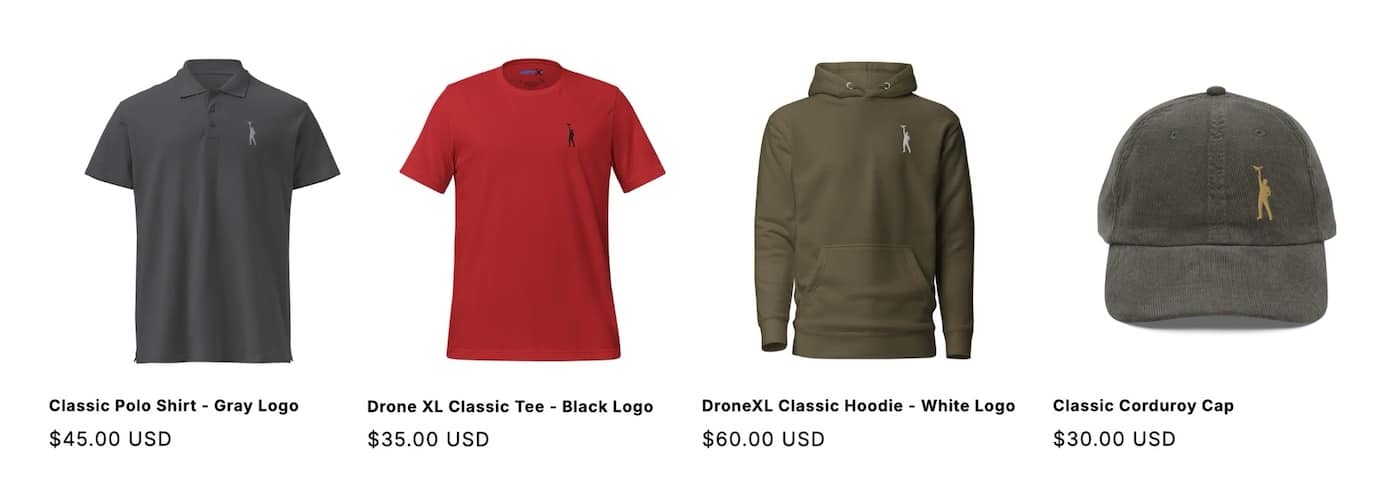

Check out our Classic Line of T-Shirts, Polos, Hoodies and more in our new store today!

MAKE YOUR VOICE HEARD

Proposed legislation threatens your ability to use drones for fun, work, and safety. The Drone Advocacy Alliance is fighting to ensure your voice is heard in these critical policy discussions.Join us and tell your elected officials to protect your right to fly.

Get your Part 107 Certificate

Pass the Part 107 test and take to the skies with the Pilot Institute. We have helped thousands of people become airplane and commercial drone pilots. Our courses are designed by industry experts to help you pass FAA tests and achieve your dreams.

Copyright © DroneXL.co 2026. All rights reserved. The content, images, and intellectual property on this website are protected by copyright law. Reproduction or distribution of any material without prior written permission from DroneXL.co is strictly prohibited. For permissions and inquiries, please contact us first. DroneXL.co is a proud partner of the Drone Advocacy Alliance. Be sure to check out DroneXL's sister site, EVXL.co, for all the latest news on electric vehicles.

FTC: DroneXL.co is an Amazon Associate and uses affiliate links that can generate income from qualifying purchases. We do not sell, share, rent out, or spam your email.

The AI genie is already out, and the problem with Amodei’s argument is that the world’s bad actors and rogue countries couldn’t care less about his or anyone else’s moral code. Does he really think that if we are bound by some AI moral code, our enemies will be too?

Do you think Iran’s leader would agree with Amodei?