Fedorov’s Gamble: Can Open-Source Battlefield Data Defeat Russian Jamming?


The footage is brutal and specific: FPV drones diving on vehicles as drivers swerve, soldiers leaping from trenches and hurling objects at incoming munitions. I’ve watched hundreds of these clips over the past three years of covering this war. Now Ukraine’s Defense Ministry is turning that entire archive — millions of videos — into a training dataset for artificial intelligence targeting systems.

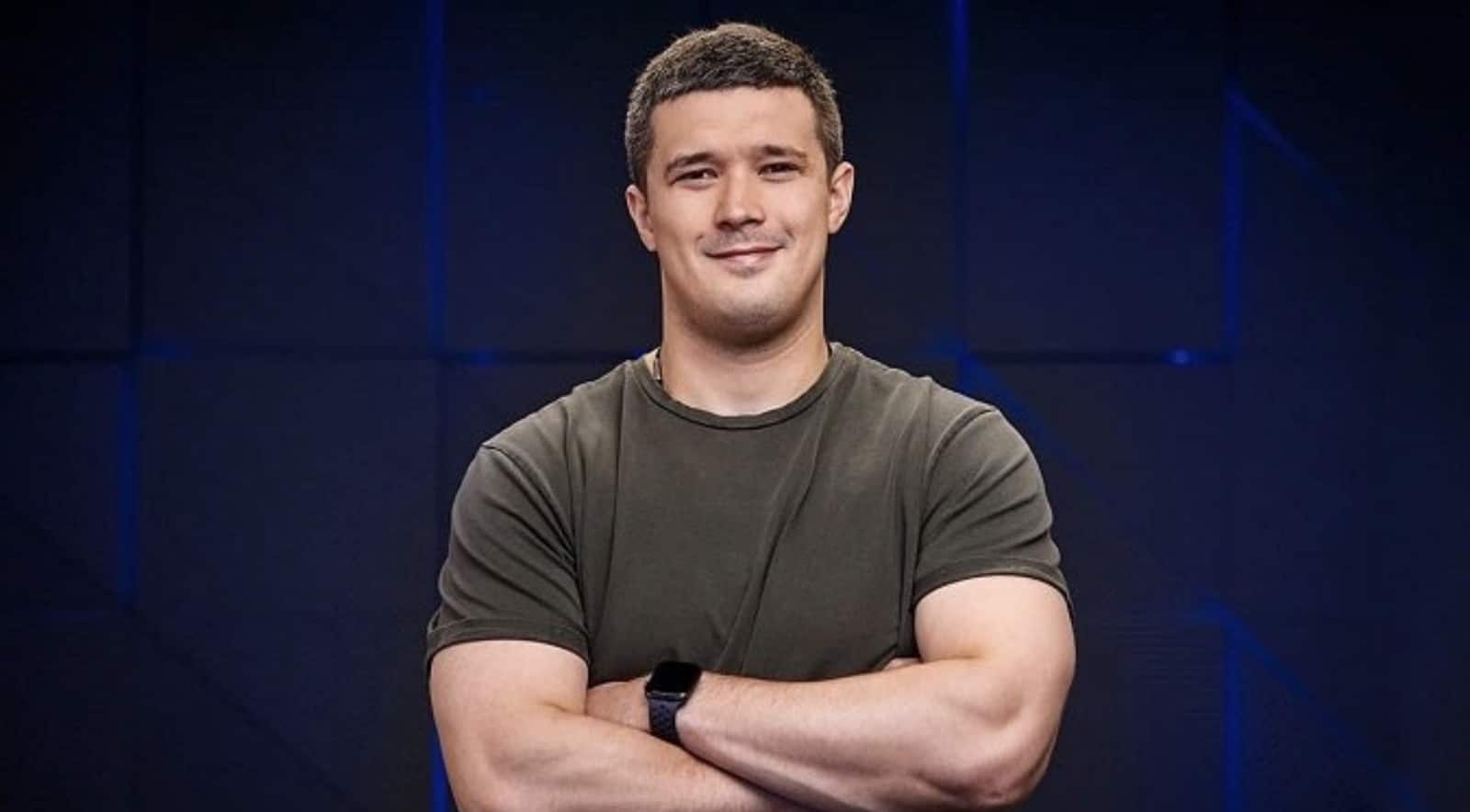

- The Development: Ukraine’s Defense Minister Mykhailo Fedorov announced Thursday that the military will give Ukrainian companies and allied-nation firms access to millions of drone videos and battlefield data to train AI models for automated targeting.

- The Scope: The dataset includes drone strike footage, surveillance video, and recordings of targets taking evasive action — exactly the edge cases AI targeting systems need to handle in real combat.

- The Constraint: Companies can train on the data but cannot take possession of the videos. A Ministry of Defense innovation center will manage the datasets.

- The Opposition: The International Committee of the Red Cross has opposed lethal autonomous systems that operate without meaningful human control over targeting decisions, and this announcement puts Ukraine’s program under direct scrutiny.

Fedorov’s Argument: AI Is a Warfighting Necessity, Not a Choice

Ukraine’s Defense Minister Mykhailo Fedorov, appointed to the role in January 2026 to accelerate the country’s drone and technology programs, framed the decision as a direct competitive response to Russia. According to the New York Times, Fedorov said “we must outperform Russia in every technological cycle” and called artificial intelligence “one of the key arenas of this competition.” The data will not leave Ministry of Defense control, but partnered companies can train their models against it — a managed access model that tries to balance operational security with faster AI development.

Ukraine has already used the same data internally. Its Delta battlefield command system — the same system NATO officers had to scramble to learn during the Hedgehog 2025 exercise — has been trained on this video archive. Opening it to allied-nation companies is a significant expansion of scope.

Why Jamming Makes AI Targeting So Attractive

Electronic jamming has become one of the defining tactical problems of this war. Jammers mounted on vehicles or carried by individual soldiers cut the radio link between pilot and drone, forcing the aircraft to crash blind or circle until the battery dies. AI-guided targeting removes the radio dependency in the final phase of the attack: the drone locks on a recognized target and prosecutes the attack without an active link to the pilot.

Fiber-optic-tethered drones are one partial answer to jamming, but they’re expensive, slow to deploy, and physically limited by spool length. AI autonomy is the other path, and it scales. Both Russia and Ukraine have been testing systems that autonomously recognize armored vehicles and personnel. The videos Ukraine is now releasing show exactly the problem these systems need to solve: what does a target do in the final two seconds before impact, and how should the drone react?

Ukraine’s FirePoint operation is already producing 200 long-range deep-strike UAVs per day across seven navigation generations, most of them GPS-independent. These are cruise-missile-class platforms, not FPV drones — but feeding AI targeting into that production pipeline changes what they can do at scale, particularly against hardened or mobile targets.

The Red Cross Objection and Ukraine’s Counter

The International Committee of the Red Cross has consistently opposed lethal autonomous systems that remove meaningful human control from targeting decisions, arguing this violates the principles of distinction and proportionality under international humanitarian law. The ICRC’s objection is specific: it isn’t opposed to AI involvement in warfare, but to systems where no human makes a genuine judgment before a person is killed. Ukraine’s position is that humans retain final authority over lethal force decisions, even as AI handles target recognition and drone maneuvering.

This is not new territory for Kyiv. Back in June 2024, Ukraine’s deputy tech minister Alex Bornyakov unveiled a prototype AI-identification drone and acknowledged the ethical tension directly. The difference now is scale and institutional commitment: this is no longer a prototype announcement but a Ministry-level data policy.

Supporters of AI targeting systems make a practical counter-argument: precision guidance can reduce civilian casualties by confining each engagement to a visually confirmed target rather than an area. Firing artillery at a grid coordinate is, in their view, less discriminating than a drone that can visually confirm a military vehicle before striking.

Drones Now Inflict More Casualties Than Any Other Weapon in This War

The context for this announcement matters. Drones have surpassed rifles, machine guns, tanks, artillery, and aerial bombs as the primary source of casualties for both Ukrainian and Russian forces. Ukraine’s Ministry of Defense reported that drones now account for more than 80% of confirmed enemy target destruction. That level of battlefield dominance means AI improvements to drone effectiveness have an outsized effect on the overall war.

Ukraine has also deployed armed ground robots and established the world’s first UGV battalion — AI-guided drones are one part of a broader push toward autonomous systems across every domain. The New York Times reports that Fedorov described autonomous systems as the defining feature of future warfare. That isn’t rhetorical. It describes active procurement and deployment policy.

DroneXL’s Take

This decision will get framed in some quarters as Ukraine crossing an ethical line. I’d push back on that framing — hard.

The Red Cross position on meaningful human control is principled and worth defending in peacetime negotiations. But Ukraine is fighting a war of survival against a country that is not waiting for international consensus on autonomous weapons. Russia is already fielding AI-assisted targeting. The New York Times editorial board warned last December that AI drone swarms capable of hunting and killing autonomously represent a genuine military revolution — and that the U.S. is already behind. Ukraine is not behind. Ukraine is building the doctrine in real time, under fire, and now offering its data to allies.

The “humans decide lethal force” assurance from Ukrainian officials is meaningful but worth watching carefully. There’s a difference between a human authorizing a mission and a human actually controlling the terminal phase of an attack. When AI handles target lock and impact maneuvering, the human role starts to look more like mission approval than kill decision. That distinction will matter a lot in the legal and ethical debates coming after this war ends.

The practical impact is clear. Fedorov was appointed defense minister specifically to accelerate this kind of technology integration. He’s moving fast. Expect allied-nation defense tech firms — particularly in the U.S., UK, and Baltic states — to sign data access agreements with the Ukrainian Ministry of Defense within six months. The training datasets from a live, high-intensity drone war are simply too valuable to pass up.

Source: New York Times (paywall), reporting by Andrew E. Kramer from Kyiv.

Editorial Note: AI tools were used to assist with research and archive retrieval for this article. All reporting, analysis, and editorial perspectives are by Haye Kesteloo.

Copyright © DroneXL.co 2026. All rights reserved. The content, images, and intellectual property on this website are protected by copyright law. Reproduction or distribution of any material without prior written permission from DroneXL.co is strictly prohibited. For permissions and inquiries, please contact us first. DroneXL.co is a proud partner of the Drone Advocacy Alliance. Be sure to check out DroneXL's sister site, EVXL.co, for all the latest news on electric vehicles.